"external hive store on rds-external-store": "j-1MO3元XSXZ45V"Īfter your cluster shows up on the active list, Amazon EMR and Atlas are ready for operation.

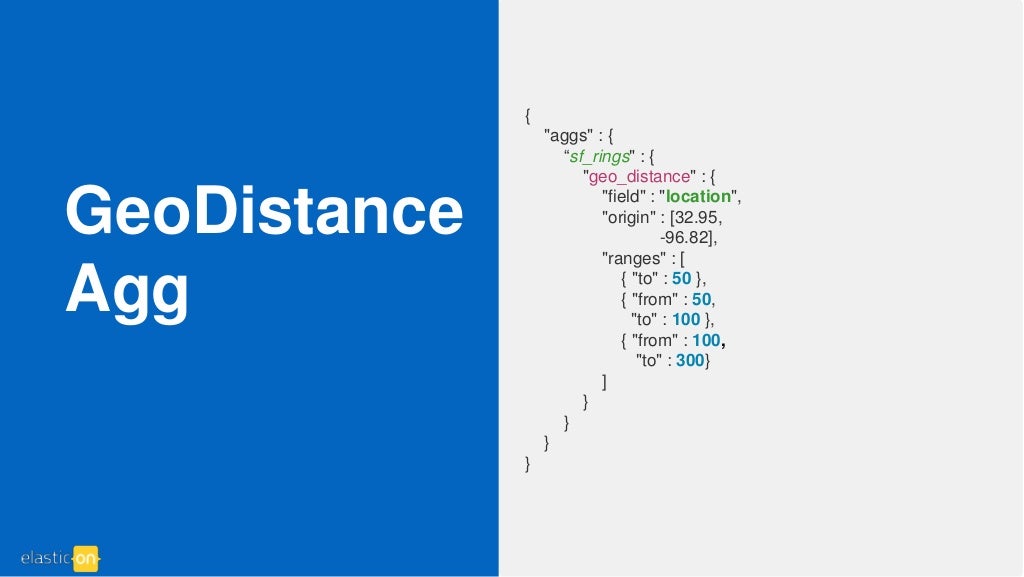

You have sufficient permissions to create S3 buckets and Amazon EMR clusters in the default AWS Region configured in the AWS CLI.Īws emr list-clusters -active | jq '.You have a default key pair, VPC, and subnet in the AWS Region where you plan to deploy your cluster.You have a working local copy of the AWS CLI package configured, with access and secret keys.The automation shell script assumes the following: It also executes a step in which a script located in an Amazon S3 bucket runs to install Apache Atlas under the /apache/atlas folder. This installation creates an Amazon EMR cluster with Hadoop, HBase, Hive, and Zookeeper. The steps following guide you through the installation of Atlas on Amazon EMR by using the AWS CLI. Launch an Amazon EMR cluster with Apache Atlas using the AWS CLI Discover metadata using the Atlas domain-specific languageġa.Using Hue, populate external Hive tables.Launch an Amazon EMR cluster using the AWS CLI or AWS CloudFormation.To demonstrate the functionality of Apache Atlas, we do the following in this post: The following diagram illustrates the architecture of our solution. A sample configuration file for the Hive service to reference an external RDS Hive metastore can be found in the Amazon EMR documentation. For the Hive metastore to persist across multiple Amazon EMR clusters, you should use an external Amazon RDS or Amazon Aurora database to contain the metastore. This solution’s architecture supports both internal and external Hive tables. Both Solr and HBase are installed on the persistent Amazon EMR cluster as part of the Atlas installation. Apache Atlas uses Apache Solr for search functions and Apache HBase for storage. ArchitectureĪpache Atlas requires that you launch an Amazon EMR cluster with prerequisite applications such as Apache Hadoop, HBase, Hue, and Hive. Also, you can use this solution for cataloging for AWS Regions that don’t have AWS Glue. 
The scope of installation of Apache Atlas on Amazon EMR is merely what’s needed for the Hive metastore on Amazon EMR to provide capability for lineage, discovery, and classification. The Data Catalog can work with any application compatible with the Hive metastore.

AWS Glue Data Catalog integrates with Amazon EMR, and also Amazon RDS, Amazon Redshift, Redshift Spectrum, and Amazon Athena. The AWS Glue Data Catalog provides a unified metadata repository across a variety of data sources and data formats. To read more about Atlas and its features, see the Atlas website. After you successfully set up Atlas, it uses a native tool to import Hive tables and analyze the data to present data lineage intuitively to the end users. It also provides features to search for key elements and their business definition.Īmong all the features that Apache Atlas offers, the core feature of our interest in this post is the Apache Hive metadata management and data lineage. Atlas supports classification of data, including storage lineage, which depicts how data has evolved. Atlas provides open metadata management and governance capabilities for organizations to build a catalog of their data assets. If you use Amazon EMR, you can choose from a defined set of applications or choose your own from a list.Īpache Atlas is an enterprise-scale data governance and metadata framework for Hadoop. Introduction to Amazon EMR and Apache AtlasĪmazon EMR is a managed service that simplifies the implementation of big data frameworks such as Apache Hadoop and Spark. As part of this, you can use a domain-specific language (DSL) in Atlas to search the metadata. You can use this setup to dynamically classify data and view the lineage of data as it moves through various processes. This post walks you through how Apache Atlas installed on Amazon EMR can provide capability for doing this. The use of metadata, cataloging, and data lineage is key for effective use of the lake. Many organizations use a data lake as a single repository to store data that is in various formats and belongs to a business entity of the organization. With the ever-evolving and growing role of data in today’s world, data governance is an essential aspect of effective data management. 
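The check for an active cluster with `aws emr list-clusters --active` can also be scripted. The following is a minimal sketch, not part of the original post, that assumes you have captured the command's JSON output as a string. It relies on the CLI's documented response shape (a top-level `Clusters` list whose entries carry `Name` and `Id`). The helper names and the sample cluster ID are hypothetical.

```python
import json
from typing import Dict


def active_cluster_ids(list_clusters_json: str) -> Dict[str, str]:
    """Map cluster name to cluster ID from `aws emr list-clusters --active` JSON output."""
    payload = json.loads(list_clusters_json)
    # The CLI wraps results in a top-level "Clusters" list;
    # each entry includes "Name" and "Id" fields.
    return {c["Name"]: c["Id"] for c in payload.get("Clusters", [])}


def is_cluster_active(list_clusters_json: str, cluster_name: str) -> bool:
    """Return True if a cluster with the given name appears in the active list."""
    return cluster_name in active_cluster_ids(list_clusters_json)


# Hypothetical sample output; the cluster ID below is a placeholder, not a real cluster.
SAMPLE_OUTPUT = json.dumps(
    {
        "Clusters": [
            {
                "Id": "j-EXAMPLE1234567",
                "Name": "external hive store on rds-external-store",
                "Status": {"State": "WAITING"},
            }
        ]
    }
)

if __name__ == "__main__":
    print(active_cluster_ids(SAMPLE_OUTPUT))
    print(is_cluster_active(SAMPLE_OUTPUT, "external hive store on rds-external-store"))
```

In practice you would pass in the text produced by `aws emr list-clusters --active --output json` rather than the sample string. Keeping the parsing in a pure function makes it easy to test without AWS credentials.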
This blog post was last reviewed and updated April, 2022. The code repositories used in this blog have been reviewed and updated to fix the solution.
